Notes from trying out Karpenter.

The official documentation also has a Getting Started with Terraform guide, but these notes follow the Getting Started with eksctl guide.

| Component | Version | Notes |
|---|---|---|
| eksctl | 0.79.0 | |
| Kubernetes version | 1.21 | |
| Platform version | eks.4 | |
| Karpenter | 0.5.4 | |
| Karpenter chart | 0.5.4 | |

Preparation

Prepare the environment for using Karpenter.

Creating the cluster

Create the cluster. A managed node group (MNG) is created along with it.

export CLUSTER_NAME=karpenter-demo
export AWS_DEFAULT_REGION=ap-northeast-1
AWS_ACCOUNT_ID=$(aws sts get-caller-identity --query Account --output text)

cat <<EOF > cluster.yaml
---
apiVersion: eksctl.io/v1alpha5
kind: ClusterConfig
metadata:
  name: ${CLUSTER_NAME}
  region: ${AWS_DEFAULT_REGION}
  version: "1.21"
managedNodeGroups:
  - instanceType: m5.large
    amiFamily: AmazonLinux2
    name: ${CLUSTER_NAME}-ng
    desiredCapacity: 1
    minSize: 1
    maxSize: 10
iam:
  withOIDC: true
EOF

eksctl create cluster -f cluster.yaml

サブネットへのタグ付け

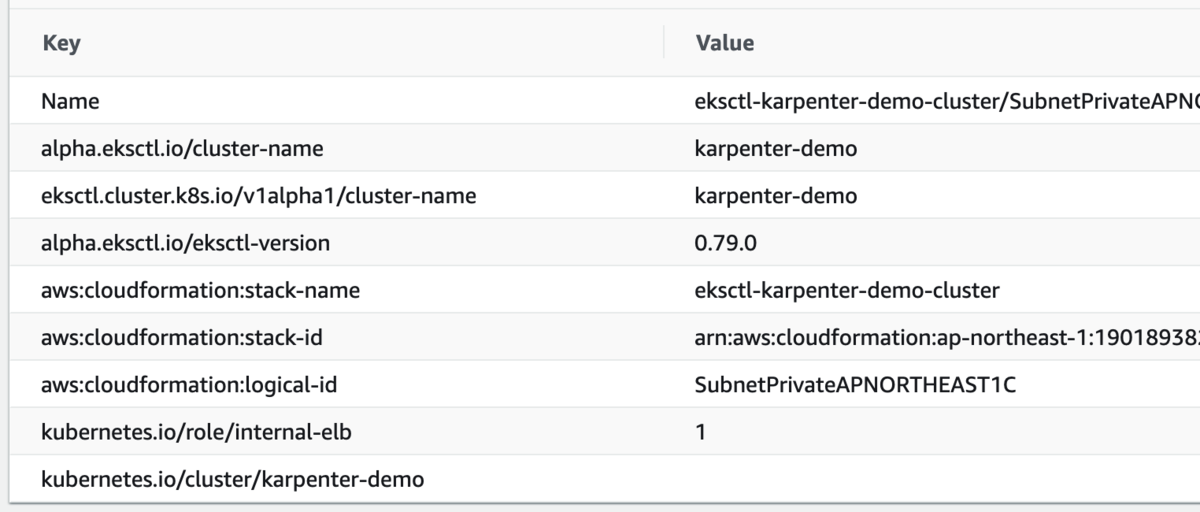

Kaerpenter がサブネットを見つけるのにタグが必要なのでタグ付けする。キーが決まっていて値は何でもよさそう。

SUBNET_IDS=$(aws cloudformation describe-stacks \
  --stack-name eksctl-${CLUSTER_NAME}-cluster \
  --query 'Stacks[].Outputs[?OutputKey==`SubnetsPrivate`].OutputValue' \
  --output text)

aws ec2 create-tags \
  --resources $(echo $SUBNET_IDS | tr ',' '\n') \
  --tags Key="kubernetes.io/cluster/${CLUSTER_NAME}",Value=

The bottom entry is the tag that was added. It is added only to the private subnets.
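To double-check the tagging without the console, the tagged subnets can also be listed from the CLI. This is a minimal sketch and not part of the Getting Started steps: the function name `list_tagged_subnets` is made up for illustration, and it assumes the AWS CLI is configured for the same account and region as above.

```shell
# Hypothetical helper (not from the Getting Started guide): list the subnets
# that carry the cluster tag key used for subnet discovery.
list_tagged_subnets() {
  cluster_name="$1"
  aws ec2 describe-subnets \
    --filters "Name=tag-key,Values=kubernetes.io/cluster/${cluster_name}" \
    --query 'Subnets[].[SubnetId,AvailabilityZone]' \
    --output text
}

# Usage: list_tagged_subnets "${CLUSTER_NAME}"
```

If the tagging went as described, only the private subnets should show up in the output.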

Creating the IAM roles

Create the IAM roles. This CloudFormation template creates an IAM role for nodes and an IAM policy for the Karpenter controller. The node role is the role attached to the nodes that Karpenter provisions.

TEMPOUT=$(mktemp)

curl -fsSL https://karpenter.sh/docs/getting-started/cloudformation.yaml > $TEMPOUT \
&& aws cloudformation deploy \
  --stack-name Karpenter-${CLUSTER_NAME} \
  --template-file ${TEMPOUT} \
  --capabilities CAPABILITY_NAMED_IAM \
  --parameter-overrides ClusterName=${CLUSTER_NAME}

Update the aws-auth ConfigMap so that the nodes are granted permissions to Kubernetes.

eksctl create iamidentitymapping \
  --username system:node:{{EC2PrivateDNSName}} \
  --cluster ${CLUSTER_NAME} \
  --arn arn:aws:iam::${AWS_ACCOUNT_ID}:role/KarpenterNodeRole-${CLUSTER_NAME} \
  --group system:bootstrappers \
  --group system:nodes

Verify the result.

$ k -n kube-system get cm aws-auth -oyaml
apiVersion: v1
data:
  mapRoles: |
    - groups:
      - system:bootstrappers
      - system:nodes
      rolearn: arn:aws:iam::XXXXXXXXXXXX:role/eksctl-karpenter-demo-nodegroup-k-NodeInstanceRole-1C7PSQCKU617K
      username: system:node:{{EC2PrivateDNSName}}
    - groups:
      - system:bootstrappers
      - system:nodes
      rolearn: arn:aws:iam::XXXXXXXXXXXX:role/KarpenterNodeRole-karpenter-demo
      username: system:node:{{EC2PrivateDNSName}}
  mapUsers: |
    []
kind: ConfigMap
metadata:
  creationTimestamp: "2022-01-16T20:41:52Z"
  name: aws-auth
  namespace: kube-system
  resourceVersion: "11499"
  uid: fe62e41b-95f6-47a7-b6ae-c57458cb8640

Create the IAM role that is granted to the controller through IRSA.

eksctl create iamserviceaccount \
  --cluster $CLUSTER_NAME --name karpenter --namespace karpenter \
  --attach-policy-arn arn:aws:iam::$AWS_ACCOUNT_ID:policy/KarpenterControllerPolicy-$CLUSTER_NAME \
  --approve

Create the service-linked role for EC2 Spot. This is required only once per account. The command returned an error here, which indicates that the role had already been created.

aws iam create-service-linked-role --aws-service-name spot.amazonaws.com
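Because the role exists at most once per account, the create call fails on every run after the first. A minimal sketch of an idempotent variant follows. The function name is hypothetical, and it assumes the role for spot.amazonaws.com is named `AWSServiceRoleForEC2Spot`.

```shell
# Hypothetical helper: create the EC2 Spot service-linked role only when it
# does not exist yet, so that re-running the setup does not end in an error.
# Assumption: the role for spot.amazonaws.com is named AWSServiceRoleForEC2Spot.
ensure_spot_service_linked_role() {
  if aws iam get-role --role-name AWSServiceRoleForEC2Spot >/dev/null 2>&1; then
    echo "AWSServiceRoleForEC2Spot already exists"
  else
    aws iam create-service-linked-role --aws-service-name spot.amazonaws.com
  fi
}

# Usage: ensure_spot_service_linked_role
```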

Deploying Karpenter

Register the Helm chart repository.

helm repo add karpenter https://charts.karpenter.sh
helm repo update

Check the chart.

$ helm search repo karpenter
NAME                  CHART VERSION   APP VERSION   DESCRIPTION
karpenter/karpenter   0.5.4           0.5.4         A Helm chart for https://github.com/aws/karpent...

Install Karpenter.

$ helm upgrade --install karpenter karpenter/karpenter --namespace karpenter \
  --create-namespace --set serviceAccount.create=false --version 0.5.4 \
  --set controller.clusterName=${CLUSTER_NAME} \
  --set controller.clusterEndpoint=$(aws eks describe-cluster --name ${CLUSTER_NAME} --query "cluster.endpoint" --output json) \
  --wait

Release "karpenter" does not exist. Installing it now.

NAME: karpenter

LAST DEPLOYED: Tue Jan 18 05:11:39 2022

NAMESPACE: karpenter

STATUS: deployed

REVISION: 1

TEST SUITE: None

Check the Pods.

$ k -n karpenter get po
NAME                                    READY   STATUS    RESTARTS   AGE
karpenter-controller-7b77564cb8-f9gcg   1/1     Running   0          105s
karpenter-webhook-7d847d4df5-t8jb4      1/1     Running   0          105s

(Optional) Enable debug logging.

kubectl patch configmap config-logging -n karpenter --patch '{"data":{"loglevel.controller":"debug"}}'

Installing Prometheus and Grafana (optional)

Register the chart repositories.

helm repo add grafana-charts https://grafana.github.io/helm-charts
helm repo add prometheus-community https://prometheus-community.github.io/helm-charts
helm repo update

Check the charts.

$ helm search repo prometheus-community/prometheus
NAME                                                 CHART VERSION   APP VERSION   DESCRIPTION
prometheus-community/prometheus                      15.0.4          2.31.1        Prometheus is a monitoring system and time seri...
prometheus-community/prometheus-adapter              3.0.1           v0.9.1        A Helm chart for k8s prometheus adapter
prometheus-community/prometheus-blackbox-exporter    5.3.1           0.19.0        Prometheus Blackbox Exporter
prometheus-community/prometheus-cloudwatch-expo...   0.18.0          0.12.2        A Helm chart for prometheus cloudwatch-exporter
prometheus-community/prometheus-consul-exporter      0.5.0           0.4.0         A Helm chart for the Prometheus Consul Exporter
prometheus-community/prometheus-couchdb-exporter     0.2.0           1.0           A Helm chart to export the metrics from couchdb...
prometheus-community/prometheus-druid-exporter       0.11.0          v0.8.0        Druid exporter to monitor druid metrics with Pr...
prometheus-community/prometheus-elasticsearch-e...   4.11.0          1.3.0         Elasticsearch stats exporter for Prometheus
prometheus-community/prometheus-json-exporter        0.1.0           1.0.2         Install prometheus-json-exporter
prometheus-community/prometheus-kafka-exporter       1.5.0           v1.4.1        A Helm chart to export the metrics from Kafka i...
prometheus-community/prometheus-mongodb-exporter     2.9.0           v0.10.0       A Prometheus exporter for MongoDB metrics
prometheus-community/prometheus-mysql-exporter       1.5.0           v0.12.1       A Helm chart for prometheus mysql exporter with...
prometheus-community/prometheus-nats-exporter        2.9.0           0.9.0         A Helm chart for prometheus-nats-exporter
prometheus-community/prometheus-node-exporter        2.5.0           1.3.1         A Helm chart for prometheus node-exporter
prometheus-community/prometheus-operator             9.3.2           0.38.1        DEPRECATED - This chart will be renamed. See ht...
prometheus-community/prometheus-pingdom-exporter     2.4.1           20190610-1    A Helm chart for Prometheus Pingdom Exporter
prometheus-community/prometheus-postgres-exporter    2.4.0           0.10.0        A Helm chart for prometheus postgres-exporter
prometheus-community/prometheus-pushgateway          1.14.0          1.4.2         A Helm chart for prometheus pushgateway
prometheus-community/prometheus-rabbitmq-exporter    1.0.0           v0.29.0       Rabbitmq metrics exporter for prometheus
prometheus-community/prometheus-redis-exporter       4.6.0           1.27.0        Prometheus exporter for Redis metrics
prometheus-community/prometheus-snmp-exporter        0.1.5           0.19.0        Prometheus SNMP Exporter
prometheus-community/prometheus-stackdriver-exp...   1.12.0          0.11.0        Stackdriver exporter for Prometheus
prometheus-community/prometheus-statsd-exporter      0.4.2           0.22.1        A Helm chart for prometheus stats-exporter
prometheus-community/prometheus-to-sd                0.4.0           0.5.2         Scrape metrics stored in prometheus format and ...
$ helm search repo grafana-charts/grafana
NAME                                    CHART VERSION   APP VERSION   DESCRIPTION
grafana-charts/grafana                  6.20.5          8.3.4         The leading tool for querying and visualizing t...
grafana-charts/grafana-agent-operator   0.1.4           0.20.0        A Helm chart for Grafana Agent Operator

Create the namespace.

$ kubectl create namespace monitoring
namespace/monitoring created

Deploy Prometheus.

$ curl -fsSL https://karpenter.sh/docs/getting-started/prometheus-values.yaml | tee prometheus-values.yaml

alertmanager:
  persistentVolume:
    enabled: false

server:
  fullnameOverride: prometheus-server
  persistentVolume:
    enabled: false

extraScrapeConfigs: |
    - job_name: karpenter
      static_configs:
      - targets:
        - karpenter-metrics.karpenter:8080

$ helm install --namespace monitoring prometheus prometheus-community/prometheus --values prometheus-values.yaml

NAME: prometheus

LAST DEPLOYED: Tue Jan 18 05:20:58 2022

NAMESPACE: monitoring

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

The Prometheus server can be accessed via port 80 on the following DNS name from within your cluster:

prometheus-server.monitoring.svc.cluster.local

Get the Prometheus server URL by running these commands in the same shell:

export POD_NAME=$(kubectl get pods --namespace monitoring -l "app=prometheus,component=server" -o jsonpath="{.items[0].metadata.name}")

kubectl --namespace monitoring port-forward $POD_NAME 9090

#################################################################################

###### WARNING: Persistence is disabled!!! You will lose your data when #####

###### the Server pod is terminated. #####

#################################################################################

The Prometheus alertmanager can be accessed via port 80 on the following DNS name from within your cluster:

prometheus-alertmanager.monitoring.svc.cluster.local

Get the Alertmanager URL by running these commands in the same shell:

export POD_NAME=$(kubectl get pods --namespace monitoring -l "app=prometheus,component=alertmanager" -o jsonpath="{.items[0].metadata.name}")

kubectl --namespace monitoring port-forward $POD_NAME 9093

#################################################################################

###### WARNING: Persistence is disabled!!! You will lose your data when #####

###### the AlertManager pod is terminated. #####

#################################################################################

#################################################################################

###### WARNING: Pod Security Policy has been moved to a global property. #####

###### use .Values.podSecurityPolicy.enabled with pod-based #####

###### annotations #####

###### (e.g. .Values.nodeExporter.podSecurityPolicy.annotations) #####

#################################################################################

The Prometheus PushGateway can be accessed via port 9091 on the following DNS name from within your cluster:

prometheus-pushgateway.monitoring.svc.cluster.local

Get the PushGateway URL by running these commands in the same shell:

export POD_NAME=$(kubectl get pods --namespace monitoring -l "app=prometheus,component=pushgateway" -o jsonpath="{.items[0].metadata.name}")

kubectl --namespace monitoring port-forward $POD_NAME 9091

For more information on running Prometheus, visit:

https://prometheus.io/

Deploy Grafana.

$ curl -fsSL https://karpenter.sh/docs/getting-started/grafana-values.yaml | tee grafana-values.yaml

datasources:
  datasources.yaml:
    apiVersion: 1
    datasources:
    - name: Prometheus
      type: prometheus
      version: 1
      url: http://prometheus-server:80
      access: proxy

$ helm install --namespace monitoring grafana grafana-charts/grafana --values grafana-values.yaml

W0118 05:22:13.264815 16206 warnings.go:70] policy/v1beta1 PodSecurityPolicy is deprecated in v1.21+, unavailable in v1.25+

W0118 05:22:14.344142 16206 warnings.go:70] policy/v1beta1 PodSecurityPolicy is deprecated in v1.21+, unavailable in v1.25+

W0118 05:22:26.410996 16206 warnings.go:70] policy/v1beta1 PodSecurityPolicy is deprecated in v1.21+, unavailable in v1.25+

W0118 05:22:26.411453 16206 warnings.go:70] policy/v1beta1 PodSecurityPolicy is deprecated in v1.21+, unavailable in v1.25+

NAME: grafana

LAST DEPLOYED: Tue Jan 18 05:22:06 2022

NAMESPACE: monitoring

STATUS: deployed

REVISION: 1

NOTES:

1. Get your 'admin' user password by running:

kubectl get secret --namespace monitoring grafana -o jsonpath="{.data.admin-password}" | base64 --decode ; echo

2. The Grafana server can be accessed via port 80 on the following DNS name from within your cluster:

grafana.monitoring.svc.cluster.local

Get the Grafana URL to visit by running these commands in the same shell:

export POD_NAME=$(kubectl get pods --namespace monitoring -l "app.kubernetes.io/name=grafana,app.kubernetes.io/instance=grafana" -o jsonpath="{.items[0].metadata.name}")

kubectl --namespace monitoring port-forward $POD_NAME 3000

3. Login with the password from step 1 and the username: admin

#################################################################################

###### WARNING: Persistence is disabled!!! You will lose your data when #####

###### the Grafana pod is terminated. #####

#################################################################################

Check the Pods.

$ k -n monitoring get po
NAME                                             READY   STATUS    RESTARTS   AGE
grafana-859fcfbbfc-bkmrx                         1/1     Running   0          42s
prometheus-alertmanager-74cb99ff8-sdsvp          2/2     Running   0          106s
prometheus-kube-state-metrics-68b6c8b5c5-78967   1/1     Running   0          106s
prometheus-node-exporter-wqp9k                   1/1     Running   0          106s
prometheus-pushgateway-7ff8d6b8c4-cbd7k          1/1     Running   0          106s
prometheus-server-74d79774cd-mccv9               2/2     Running   0          106s

Get the Grafana password.

kubectl get secret --namespace monitoring grafana -o jsonpath="{.data.admin-password}" | base64 --decode

Access Grafana through a port forward.

kubectl port-forward --namespace monitoring svc/grafana 3000:80

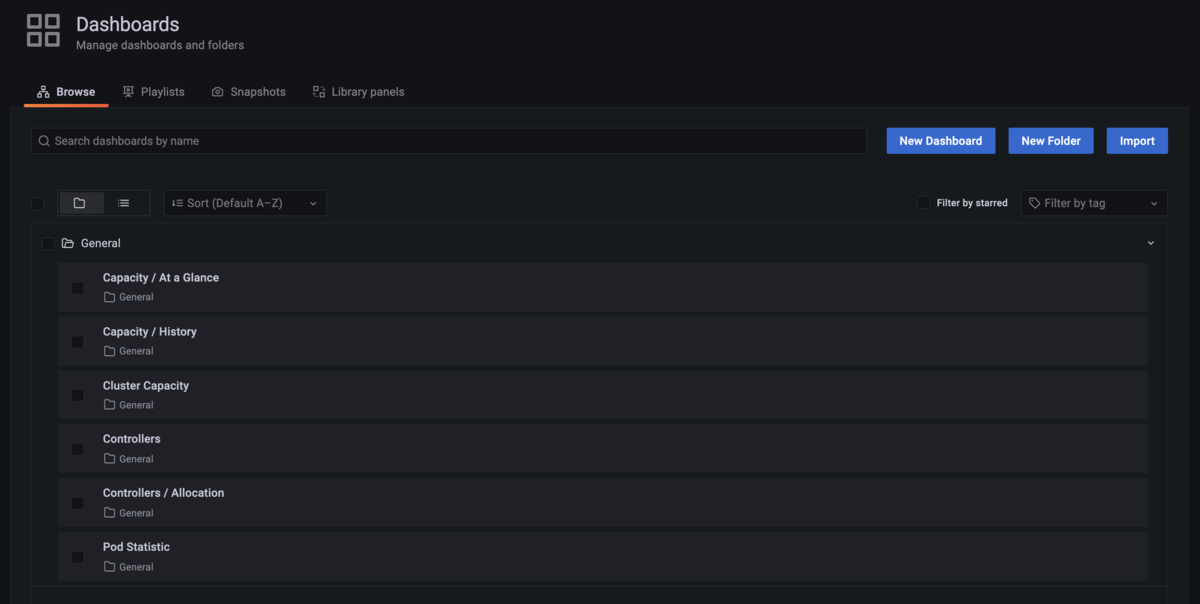

Import the dashboard.

Creating the Provisioner

Create the default Provisioner.

$ cat <<EOF | kubectl apply -f -
apiVersion: karpenter.sh/v1alpha5
kind: Provisioner
metadata:
  name: default
spec:
  requirements:
    - key: karpenter.sh/capacity-type
      operator: In
      values: ["spot"]
  limits:
    resources:
      cpu: 1000
  provider:
    instanceProfile: KarpenterNodeInstanceProfile-${CLUSTER_NAME}
  ttlSecondsAfterEmpty: 30
EOF
provisioner.karpenter.sh/default created

Verify the result.

$ k get Provisioner
NAME      AGE
default   21s

Using Karpenter

Create a Deployment whose Pods run the pause image and request 1 CPU each, starting with 0 replicas.

$ cat <<EOF | kubectl apply -f -
apiVersion: apps/v1
kind: Deployment
metadata:
  name: inflate
spec:
  replicas: 0
  selector:
    matchLabels:
      app: inflate
  template:
    metadata:
      labels:
        app: inflate
    spec:
      terminationGracePeriodSeconds: 0
      containers:
        - name: inflate
          image: public.ecr.aws/eks-distro/kubernetes/pause:3.2
          resources:
            requests:
              cpu: 1
EOF
deployment.apps/inflate created

Scale the Deployment.

$ kubectl scale deployment inflate --replicas 5
deployment.apps/inflate scaled

Check the controller logs.

$ kubectl logs -f -n karpenter $(kubectl get pods -n karpenter -l karpenter=controller -o name)

2022-01-17T20:12:07.548Z INFO Successfully created the logger.

2022-01-17T20:12:07.548Z INFO Logging level set to: info

{"level":"info","ts":1642450327.6526809,"logger":"fallback","caller":"injection/injection.go:61","msg":"Starting informers..."}

I0117 20:12:07.670361 1 leaderelection.go:243] attempting to acquire leader lease karpenter/karpenter-leader-election...

2022-01-17T20:12:07.670Z INFO controller starting metrics server {"commit": "7e79a67", "path": "/metrics"}

I0117 20:12:07.715692 1 leaderelection.go:253] successfully acquired lease karpenter/karpenter-leader-election

2022-01-17T20:12:07.771Z INFO controller.controller.counter Starting EventSource {"commit": "7e79a67", "reconciler group": "karpenter.sh", "reconciler kind": "Provisioner", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.771Z INFO controller.controller.counter Starting EventSource {"commit": "7e79a67", "reconciler group": "karpenter.sh", "reconciler kind": "Provisioner", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.771Z INFO controller.controller.counter Starting Controller {"commit": "7e79a67", "reconciler group": "karpenter.sh", "reconciler kind": "Provisioner"}

2022-01-17T20:12:07.772Z INFO controller.controller.provisioning Starting EventSource {"commit": "7e79a67", "reconciler group": "karpenter.sh", "reconciler kind": "Provisioner", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.772Z INFO controller.controller.provisioning Starting Controller {"commit": "7e79a67", "reconciler group": "karpenter.sh", "reconciler kind": "Provisioner"}

2022-01-17T20:12:07.772Z INFO controller.controller.volume Starting EventSource {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "PersistentVolumeClaim", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.772Z INFO controller.controller.volume Starting EventSource {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "PersistentVolumeClaim", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.772Z INFO controller.controller.volume Starting Controller {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "PersistentVolumeClaim"}

2022-01-17T20:12:07.772Z INFO controller.controller.termination Starting EventSource {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.772Z INFO controller.controller.termination Starting Controller {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node"}

2022-01-17T20:12:07.773Z INFO controller.controller.node Starting EventSource {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.773Z INFO controller.controller.node Starting EventSource {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.773Z INFO controller.controller.node Starting EventSource {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.773Z INFO controller.controller.node Starting Controller {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node"}

2022-01-17T20:12:07.773Z INFO controller.controller.podmetrics Starting EventSource {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Pod", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.773Z INFO controller.controller.podmetrics Starting Controller {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Pod"}

2022-01-17T20:12:07.773Z INFO controller.controller.nodemetrics Starting EventSource {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.773Z INFO controller.controller.nodemetrics Starting EventSource {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.773Z INFO controller.controller.nodemetrics Starting EventSource {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node", "source": "kind source: /, Kind="}

2022-01-17T20:12:07.773Z INFO controller.controller.nodemetrics Starting Controller {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node"}

2022-01-17T20:12:07.872Z INFO controller.controller.provisioning Starting workers {"commit": "7e79a67", "reconciler group": "karpenter.sh", "reconciler kind": "Provisioner", "worker count": 10}

2022-01-17T20:12:07.873Z INFO controller.controller.termination Starting workers {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node", "worker count": 10}

2022-01-17T20:12:07.873Z INFO controller.controller.counter Starting workers {"commit": "7e79a67", "reconciler group": "karpenter.sh", "reconciler kind": "Provisioner", "worker count": 10}

2022-01-17T20:12:07.873Z INFO controller.controller.volume Starting workers {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "PersistentVolumeClaim", "worker count": 1}

2022-01-17T20:12:07.888Z INFO controller.controller.podmetrics Starting workers {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Pod", "worker count": 1}

2022-01-17T20:12:07.891Z INFO controller.controller.node Starting workers {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node", "worker count": 10}

2022-01-17T20:12:07.891Z INFO controller.controller.nodemetrics Starting workers {"commit": "7e79a67", "reconciler group": "", "reconciler kind": "Node", "worker count": 1}

2022-01-17T20:15:12.525Z INFO controller Updating logging level for controller from info to debug. {"commit": "7e79a67"}

2022-01-17T20:35:26.973Z DEBUG controller.provisioning Discovered 324 EC2 instance types {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:35:27.216Z DEBUG controller.provisioning Discovered subnets: [subnet-021d3b8da085b55a9 (ap-northeast-1c) subnet-02e70ec727156b94f (ap-northeast-1d) subnet-0d529a446897f24a2 (ap-northeast-1a)] {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:35:27.336Z DEBUG controller.provisioning Discovered EC2 instance types zonal offerings {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:35:27.341Z INFO controller.provisioning Waiting for unschedulable pods {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:40:28.066Z DEBUG controller.provisioning Discovered 324 EC2 instance types {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:40:28.287Z DEBUG controller.provisioning Discovered subnets: [subnet-021d3b8da085b55a9 (ap-northeast-1c) subnet-02e70ec727156b94f (ap-northeast-1d) subnet-0d529a446897f24a2 (ap-northeast-1a)] {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:40:28.406Z DEBUG controller.provisioning Discovered EC2 instance types zonal offerings {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:41:56.769Z INFO controller.provisioning Batched 5 pods in 1.080460937s {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:41:56.852Z DEBUG controller.provisioning Discovered subnets: [subnet-021d3b8da085b55a9 (ap-northeast-1c) subnet-02e70ec727156b94f (ap-northeast-1d) subnet-0d529a446897f24a2 (ap-northeast-1a)] {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:41:56.967Z DEBUG controller.provisioning Excluding instance type t3a.nano because there are not enough resources for kubelet and system overhead {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:41:56.974Z DEBUG controller.provisioning Excluding instance type t3.nano because there are not enough resources for kubelet and system overhead {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:41:56.979Z INFO controller.provisioning Computed packing of 1 node(s) for 5 pod(s) with instance type option(s) [c1.xlarge c4.2xlarge c3.2xlarge c5a.2xlarge c5.2xlarge c6i.2xlarge c5d.2xlarge c5n.2xlarge m3.2xlarge m5n.2xlarge m6i.2xlarge m5zn.2xlarge t3.2xlarge m5d.2xlarge m5a.2xlarge m5.2xlarge t3a.2xlarge m5ad.2xlarge m4.2xlarge m5dn.2xlarge] {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:41:57.138Z DEBUG controller.provisioning Discovered security groups: [sg-0ef398d023a1842ba] {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:41:57.140Z DEBUG controller.provisioning Discovered kubernetes version 1.21 {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:41:57.211Z DEBUG controller.provisioning Discovered ami ami-0b7bbd8c99e925a83 for query /aws/service/eks/optimized-ami/1.21/amazon-linux-2/recommended/image_id {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:41:57.211Z DEBUG controller.provisioning Discovered caBundle, length 1066 {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:41:57.360Z DEBUG controller.provisioning Created launch template, Karpenter-karpenter-demo-17569880151886454907 {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:42:01.109Z INFO controller.provisioning Launched instance: i-0473f37e849fdd8ec, hostname: ip-192-168-133-127.ap-northeast-1.compute.internal, type: t3a.2xlarge, zone: ap-northeast-1d, capacityType: spot {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:42:01.191Z INFO controller.provisioning Bound 5 pod(s) to node ip-192-168-133-127.ap-northeast-1.compute.internal {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T20:42:01.191Z INFO controller.provisioning Waiting for unschedulable pods {"commit": "7e79a67", "provisioner": "default"}

A node that can hold all 5 Pods was provisioned.

$ k get po -o wide
NAME                       READY   STATUS    RESTARTS   AGE     IP                NODE                                                 NOMINATED NODE   READINESS GATES
inflate-6b88c9fb68-7h4vw   1/1     Running   0          2m52s   192.168.128.88    ip-192-168-133-127.ap-northeast-1.compute.internal   <none>           <none>
inflate-6b88c9fb68-c6hmr   1/1     Running   0          2m52s   192.168.159.221   ip-192-168-133-127.ap-northeast-1.compute.internal   <none>           <none>
inflate-6b88c9fb68-cqd9v   1/1     Running   0          2m52s   192.168.137.10    ip-192-168-133-127.ap-northeast-1.compute.internal   <none>           <none>
inflate-6b88c9fb68-ws5jq   1/1     Running   0          2m52s   192.168.159.185   ip-192-168-133-127.ap-northeast-1.compute.internal   <none>           <none>
inflate-6b88c9fb68-xj6pc   1/1     Running   0          2m52s   192.168.137.151   ip-192-168-133-127.ap-northeast-1.compute.internal   <none>           <none>
$ k get node -o wide
NAME                                                 STATUS   ROLES    AGE    VERSION               INTERNAL-IP       EXTERNAL-IP    OS-IMAGE         KERNEL-VERSION                CONTAINER-RUNTIME
ip-192-168-133-127.ap-northeast-1.compute.internal   Ready    <none>   3m4s   v1.21.5-eks-bc4871b   192.168.133.127   <none>         Amazon Linux 2   5.4.162-86.275.amzn2.x86_64   containerd://1.4.6
ip-192-168-72-151.ap-northeast-1.compute.internal    Ready    <none>   24h    v1.21.5-eks-bc4871b   192.168.72.151    54.92.61.102   Amazon Linux 2   5.4.162-86.275.amzn2.x86_64   docker://20.10.7
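The sizing can be sanity-checked with simple arithmetic. The sketch below uses the values from the Deployment (5 replicas, 1 CPU each). The 8 vCPU figure for t3a.2xlarge is an assumption taken from the public instance specifications, not from the logs, and the capacity reserved for the kubelet and system daemons is ignored.

```shell
# CPU requests that have to be placed (values from the inflate Deployment:
# 5 replicas, each requesting 1 CPU).
replicas=5
cpu_per_pod=1
total_cpu=$((replicas * cpu_per_pod))

# Assumption: t3a.2xlarge exposes 8 vCPUs. Part of that is reserved for the
# kubelet and system daemons, so the allocatable amount is somewhat lower.
node_vcpu=8

echo "requested=${total_cpu} node_vcpu=${node_vcpu}"
if [ "$total_cpu" -le "$node_vcpu" ]; then
  echo "fits on one node"
fi
```

A 4 vCPU size would not cover the 5 requested CPUs, which is consistent with the candidate list in the log consisting mostly of 2xlarge sizes.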

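The sequence of events at this point was as follows.

- Karpenter registers the node and schedules the Pods right away

- At that point the node status is Unknown, and the Pods are Pending but already scheduled

- After a while the node becomes NotReady and the Pods move to ContainerCreating

- Once the node becomes Ready, the Pods become Running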
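These transitions are easiest to follow with two watches running side by side. A minimal sketch follows. The function names are hypothetical, and `app=inflate` is the label from the Deployment's Pod template.

```shell
# Hypothetical helpers for following the transitions. Run each one in its
# own terminal right after scaling the Deployment.
watch_nodes() {
  # Shows the new node moving through Unknown, NotReady and Ready.
  kubectl get nodes --watch
}

watch_inflate_pods() {
  # app=inflate is the label set in the Deployment's Pod template.
  kubectl get pods -l app=inflate -o wide --watch
}
```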

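Points that caught my attention:

- The Karpenter-provisioned node uses containerd as its container runtime rather than Docker (which the MNG node uses), because Karpenter creates a launch template whose user data specifies containerd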

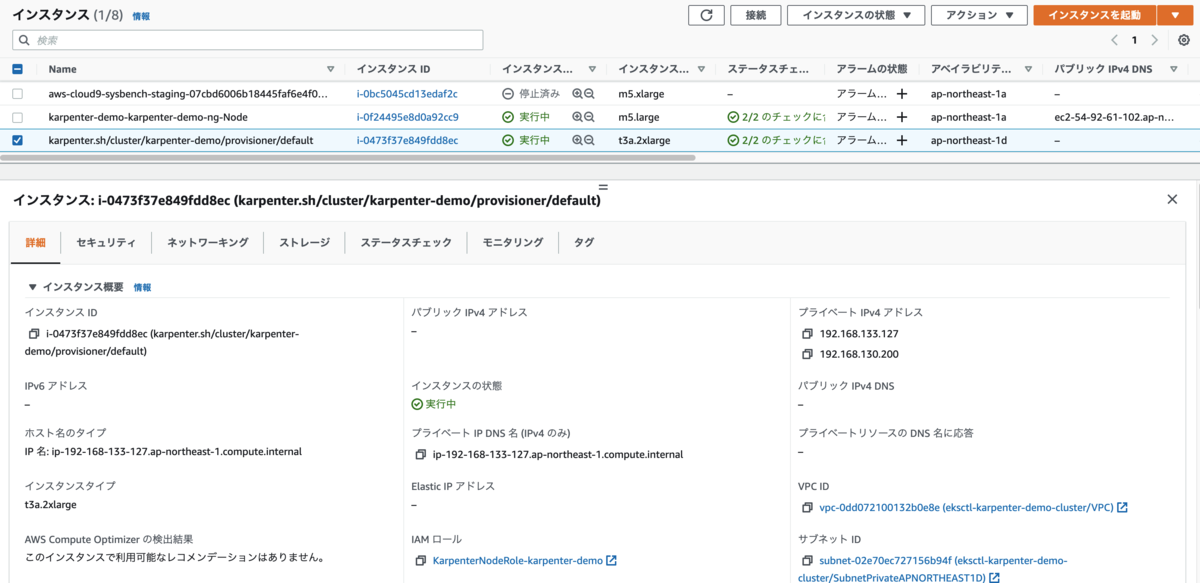

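- The VPC has three public subnets and three private subnets, and the MNG is configured to be placed in the public subnets

- Because only the private subnets were tagged, Karpenter creates its nodes in the private subnets

Check the instance in the EC2 console. The IAM role created earlier is attached. The Provisioner referenced this role through its instance profile.

Karpenter reportedly uses the EC2 Fleet API, so I tried describe-fleets, but nothing was returned.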

$ aws ec2 describe-fleets

{

"Fleets": []

}

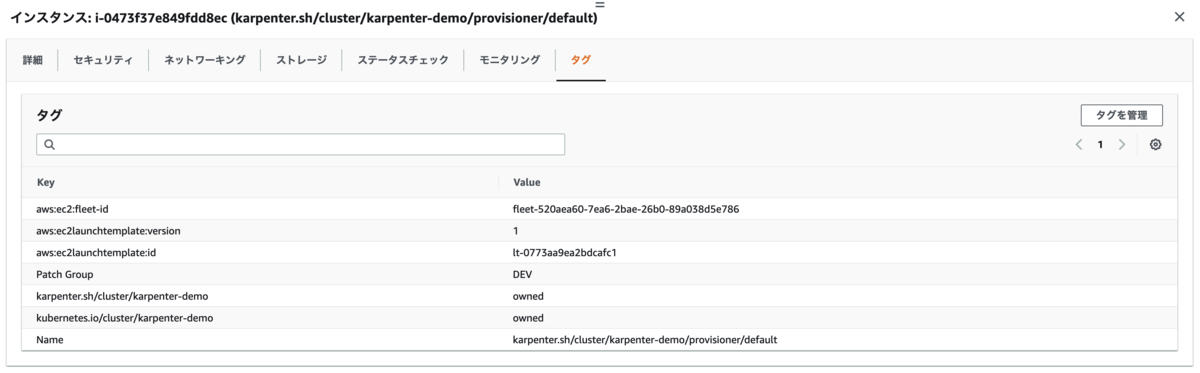

The instance carries the aws:ec2:fleet-id tag.
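The tag value can also be read from the CLI instead of the console. A minimal sketch, assuming the AWS CLI is configured for the same region; the function name `fleet_id_of_instance` is made up for illustration.

```shell
# Hypothetical helper: print the value of the aws:ec2:fleet-id tag for one
# instance, using the resource-id and key filters of describe-tags.
fleet_id_of_instance() {
  instance_id="$1"
  aws ec2 describe-tags \
    --filters "Name=resource-id,Values=${instance_id}" "Name=key,Values=aws:ec2:fleet-id" \
    --query 'Tags[].Value' \
    --output text
}

# Usage:
#   aws ec2 describe-fleets --fleet-ids "$(fleet_id_of_instance i-0473f37e849fdd8ec)"
```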

Because the Provisioner specifies spot, I checked the Spot Instance requests and found one.

$ aws ec2 describe-spot-instance-requests

{

"SpotInstanceRequests": [

{

"CreateTime": "2022-01-17T20:41:59+00:00",

"InstanceId": "i-0473f37e849fdd8ec",

"LaunchSpecification": {

"SecurityGroups": [

{

"GroupName": "eks-cluster-sg-karpenter-demo-619918540",

"GroupId": "sg-0ef398d023a1842ba"

}

],

"IamInstanceProfile": {

"Arn": "arn:aws:iam::XXXXXXXXXXXX:instance-profile/KarpenterNodeInstanceProfile-karpenter-demo",

"Name": "KarpenterNodeInstanceProfile-karpenter-demo"

},

"ImageId": "ami-0b7bbd8c99e925a83",

"InstanceType": "t3a.2xlarge",

"NetworkInterfaces": [

{

"DeleteOnTermination": true,

"DeviceIndex": 0,

"SubnetId": "subnet-02e70ec727156b94f"

}

],

"Placement": {

"AvailabilityZone": "ap-northeast-1d",

"Tenancy": "default"

},

"Monitoring": {

"Enabled": false

}

},

"LaunchedAvailabilityZone": "ap-northeast-1d",

"ProductDescription": "Linux/UNIX",

"SpotInstanceRequestId": "sir-r8268drq",

"SpotPrice": "0.391700",

"State": "active",

"Status": {

"Code": "fulfilled",

"Message": "Your spot request is fulfilled.",

"UpdateTime": "2022-01-17T21:08:39+00:00"

},

"Tags": [],

"Type": "one-time",

"InstanceInterruptionBehavior": "terminate"

}

]

}

The following command failed. Since the ID starts with sir-, this appears to be a Spot Instance request rather than a Spot Fleet request.

- https://docs.aws.amazon.com/cli/latest/reference/ec2/request-spot-fleet.html

- https://docs.aws.amazon.com/cli/latest/reference/ec2/request-spot-instances.html

$ aws ec2 describe-spot-fleet-instances --spot-fleet-request-id sir-r8268drq

An error occurred (InvalidParameterValue) when calling the DescribeSpotFleetInstances operation: 1 validation error detected: Value 'sir-r8268drq' at 'spotFleetRequestId' failed to satisfy constraint: Member must satisfy regular expression pattern: ^(sfr|fleet)-[a-z0-9-]{36}\b
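The prefix alone tells which API an ID belongs to. The sketch below encodes the three prefixes that appear in these notes: `sir-` from the Spot Instance request, and `sfr-` and `fleet-` from the regular expression in the error message. The function name and the labels it prints are made up for illustration.

```shell
# Hypothetical helper: classify an EC2 request ID by its prefix.
classify_ec2_request_id() {
  case "$1" in
    sir-*)   echo "spot-instance-request" ;;  # describe-spot-instance-requests
    sfr-*)   echo "spot-fleet-request" ;;     # describe-spot-fleet-requests
    fleet-*) echo "ec2-fleet" ;;              # describe-fleets
    *)       echo "unknown" ;;
  esac
}

classify_ec2_request_id sir-r8268drq
classify_ec2_request_id fleet-728040e2-5e26-0924-843a-ab8a64977bca
```

The first call prints `spot-instance-request` and the second prints `ec2-fleet`.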

Delete the Deployment. Because the Provisioner specifies ttlSecondsAfterEmpty: 30, the node is deleted 30 seconds after its last Pod is gone.

$ kubectl delete deployment inflate
deployment.apps "inflate" deleted

Check the logs.

$ kubectl logs -f -n karpenter $(kubectl get pods -n karpenter -l karpenter=controller -o name)

...

2022-01-17T21:15:35.530Z DEBUG controller.provisioning Discovered 324 EC2 instance types {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T21:15:35.630Z DEBUG controller.provisioning Discovered subnets: [subnet-021d3b8da085b55a9 (ap-northeast-1c) subnet-02e70ec727156b94f (ap-northeast-1d) subnet-0d529a446897f24a2 (ap-northeast-1a)] {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T21:15:35.757Z DEBUG controller.provisioning Discovered EC2 instance types zonal offerings {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T21:20:36.489Z DEBUG controller.provisioning Discovered 324 EC2 instance types {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T21:20:36.698Z DEBUG controller.provisioning Discovered subnets: [subnet-021d3b8da085b55a9 (ap-northeast-1c) subnet-02e70ec727156b94f (ap-northeast-1d) subnet-0d529a446897f24a2 (ap-northeast-1a)] {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T21:20:36.818Z DEBUG controller.provisioning Discovered EC2 instance types zonal offerings {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T21:25:33.626Z INFO controller.node Added TTL to empty node {"commit": "7e79a67", "node": "ip-192-168-133-127.ap-northeast-1.compute.internal"}

2022-01-17T21:25:37.567Z DEBUG controller.provisioning Discovered 324 EC2 instance types {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T21:25:37.713Z DEBUG controller.provisioning Discovered subnets: [subnet-021d3b8da085b55a9 (ap-northeast-1c) subnet-02e70ec727156b94f (ap-northeast-1d) subnet-0d529a446897f24a2 (ap-northeast-1a)] {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T21:25:37.845Z DEBUG controller.provisioning Discovered EC2 instance types zonal offerings {"commit": "7e79a67", "provisioner": "default"}

2022-01-17T21:26:03.646Z INFO controller.node Triggering termination after 30s for empty node {"commit": "7e79a67", "node": "ip-192-168-133-127.ap-northeast-1.compute.internal"}

2022-01-17T21:26:03.685Z INFO controller.termination Cordoned node {"commit": "7e79a67", "node": "ip-192-168-133-127.ap-northeast-1.compute.internal"}

2022-01-17T21:26:03.964Z INFO controller.termination Deleted node {"commit": "7e79a67", "node": "ip-192-168-133-127.ap-northeast-1.compute.internal"}
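The gap between the "Added TTL to empty node" line and the "Triggering termination" line can be checked against ttlSecondsAfterEmpty. A minimal sketch, with the two timestamps copied from the log and the fractional seconds dropped. It assumes GNU date; the `-d` option used here is not available in the BSD/macOS date.

```shell
# Timestamps copied from the two controller.node log lines
# (fractional seconds dropped).
ttl_added="2022-01-17T21:25:33Z"
termination_triggered="2022-01-17T21:26:03Z"

# Assumption: GNU date (Linux). Convert both timestamps to epoch seconds.
start=$(date -u -d "$ttl_added" +%s)
end=$(date -u -d "$termination_triggered" +%s)

elapsed=$((end - start))
echo "elapsed=${elapsed}s"
```

The result is 30 seconds, which matches the ttlSecondsAfterEmpty value set in the Provisioner.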

Check the nodes. The Karpenter node has been deleted.

$ k get node -o wide
NAME                                                STATUS   ROLES    AGE   VERSION               INTERNAL-IP      EXTERNAL-IP    OS-IMAGE         KERNEL-VERSION                CONTAINER-RUNTIME
ip-192-168-72-151.ap-northeast-1.compute.internal   Ready    <none>   24h   v1.21.5-eks-bc4871b   192.168.72.151   54.92.61.102   Amazon Linux 2   5.4.162-86.275.amzn2.x86_64   docker://20.10.7

As for the relationship with the EC2 Fleet API, calling describe-fleets with the fleet ID specified did return the fleet. It seems the Spot Instance is launched from EC2 Fleet rather than from Spot Fleet.

$ aws ec2 describe-fleets --fleet-ids fleet-728040e2-5e26-0924-843a-ab8a64977bca

{

"Fleets": [

{

"ActivityStatus": "fulfilled",

"CreateTime": "2022-01-18T00:48:51+00:00",

"FleetId": "fleet-728040e2-5e26-0924-843a-ab8a64977bca",

"FleetState": "active",

"ClientToken": "EC2Fleet-7b91c7a1-6281-4d8c-a02d-cd930fefe667",

"FulfilledCapacity": 1.0,

"FulfilledOnDemandCapacity": 0.0,

"LaunchTemplateConfigs": [

{

"LaunchTemplateSpecification": {

"LaunchTemplateName": "Karpenter-karpenter-demo-17569880151886454907",

"Version": "$Default"

},

"Overrides": [

{

"InstanceType": "c1.xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 0.0

},

{

"InstanceType": "c1.xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 0.0

},

{

"InstanceType": "c4.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 1.0

},

{

"InstanceType": "c4.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 1.0

},

{

"InstanceType": "c4.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 1.0

},

{

"InstanceType": "c3.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 2.0

},

{

"InstanceType": "c3.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 2.0

},

{

"InstanceType": "c5.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 3.0

},

{

"InstanceType": "c5.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 3.0

},

{

"InstanceType": "c5.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 3.0

},

{

"InstanceType": "c5a.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 4.0

},

{

"InstanceType": "c5a.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 4.0

},

{

"InstanceType": "c5d.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 5.0

},

{

"InstanceType": "c5d.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 5.0

},

{

"InstanceType": "c5d.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 5.0

},

{

"InstanceType": "c6i.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 6.0

},

{

"InstanceType": "c6i.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 6.0

},

{

"InstanceType": "c5n.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 7.0

},

{

"InstanceType": "c5n.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 7.0

},

{

"InstanceType": "c5n.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 7.0

},

{

"InstanceType": "m3.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 8.0

},

{

"InstanceType": "m3.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 8.0

},

{

"InstanceType": "m5n.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 9.0

},

{

"InstanceType": "m5n.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 9.0

},

{

"InstanceType": "m5n.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 9.0

},

{

"InstanceType": "m5dn.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 10.0

},

{

"InstanceType": "m5dn.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 10.0

},

{

"InstanceType": "m5dn.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 10.0

},

{

"InstanceType": "m5.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 11.0

},

{

"InstanceType": "m5.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 11.0

},

{

"InstanceType": "m5.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 11.0

},

{

"InstanceType": "m5a.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 12.0

},

{

"InstanceType": "m5a.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 12.0

},

{

"InstanceType": "m5a.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 12.0

},

{

"InstanceType": "m6i.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 13.0

},

{

"InstanceType": "m6i.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 13.0

},

{

"InstanceType": "m5zn.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 14.0

},

{

"InstanceType": "m5zn.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 14.0

},

{

"InstanceType": "t3.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 15.0

},

{

"InstanceType": "t3.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 15.0

},

{

"InstanceType": "t3.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 15.0

},

{

"InstanceType": "m5ad.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 16.0

},

{

"InstanceType": "m5ad.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 16.0

},

{

"InstanceType": "m5ad.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 16.0

},

{

"InstanceType": "m5d.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 17.0

},

{

"InstanceType": "m5d.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 17.0

},

{

"InstanceType": "m5d.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 17.0

},

{

"InstanceType": "m4.2xlarge",

"SubnetId": "subnet-021d3b8da085b55a9",

"AvailabilityZone": "ap-northeast-1c",

"Priority": 18.0

},

{

"InstanceType": "m4.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 18.0

},

{

"InstanceType": "m4.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 18.0

},

{

"InstanceType": "t3a.2xlarge",

"SubnetId": "subnet-0d529a446897f24a2",

"AvailabilityZone": "ap-northeast-1a",

"Priority": 19.0

},

{

"InstanceType": "t3a.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 19.0

}

]

}

],

"TargetCapacitySpecification": {

"TotalTargetCapacity": 1,

"OnDemandTargetCapacity": 0,

"SpotTargetCapacity": 0,

"DefaultTargetCapacityType": "spot"

},

"TerminateInstancesWithExpiration": false,

"Type": "instant",

"ValidFrom": "1970-01-01T00:00:00+00:00",

"ValidUntil": "1970-01-01T00:00:00+00:00",

"ReplaceUnhealthyInstances": false,

"SpotOptions": {

"AllocationStrategy": "capacity-optimized-prioritized"

},

"OnDemandOptions": {

"AllocationStrategy": "lowest-price"

},

"Errors": [],

"Instances": [

{

"LaunchTemplateAndOverrides": {

"LaunchTemplateSpecification": {

"LaunchTemplateId": "lt-0773aa9ea2bdcafc1",

"Version": "1"

},

"Overrides": {

"InstanceType": "t3a.2xlarge",

"SubnetId": "subnet-02e70ec727156b94f",

"AvailabilityZone": "ap-northeast-1d",

"Priority": 19.0

}

},

"Lifecycle": "spot",

"InstanceIds": [

"i-0021fb5c588e50edd"

],

"InstanceType": "t3a.2xlarge"

}

]

}

]

}
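The output above is long, so the following is a minimal sketch of how it could be summarized: it groups the candidate overrides by priority and instance type, and lists which override the fleet actually used. The `SAMPLE` string is a hand-trimmed excerpt of the output above (only the first and last instance types and only the fields the script reads), and the function name `summarize_fleet` is made up for this example. In practice the JSON would come from saving the `aws ec2 describe-fleets` output to a file.

```python
import json
from collections import defaultdict

# Hand-trimmed excerpt of the describe-fleets output above.
# Only the fields read below are kept; the real output has many more overrides.
SAMPLE = """
{
  "Fleets": [
    {
      "FleetId": "fleet-728040e2-5e26-0924-843a-ab8a64977bca",
      "Type": "instant",
      "LaunchTemplateConfigs": [
        {
          "Overrides": [
            {"InstanceType": "c1.xlarge", "AvailabilityZone": "ap-northeast-1c", "Priority": 0.0},
            {"InstanceType": "c1.xlarge", "AvailabilityZone": "ap-northeast-1a", "Priority": 0.0},
            {"InstanceType": "t3a.2xlarge", "AvailabilityZone": "ap-northeast-1a", "Priority": 19.0},
            {"InstanceType": "t3a.2xlarge", "AvailabilityZone": "ap-northeast-1d", "Priority": 19.0}
          ]
        }
      ],
      "SpotOptions": {"AllocationStrategy": "capacity-optimized-prioritized"},
      "Instances": [
        {
          "LaunchTemplateAndOverrides": {
            "Overrides": {
              "InstanceType": "t3a.2xlarge",
              "AvailabilityZone": "ap-northeast-1d",
              "Priority": 19.0
            }
          },
          "Lifecycle": "spot",
          "InstanceIds": ["i-0021fb5c588e50edd"],
          "InstanceType": "t3a.2xlarge"
        }
      ]
    }
  ]
}
"""


def summarize_fleet(fleet):
    """Summarize one entry of the "Fleets" array (hypothetical helper).

    Returns (candidates, launched):
      candidates: list of (priority, instance_type, [availability zones]), sorted by priority
      launched:   list of (instance_id, lifecycle, instance_type, availability_zone, priority)
    """
    # Collect the candidate Availability Zones per (priority, instance type).
    zones = defaultdict(list)
    for config in fleet.get("LaunchTemplateConfigs", []):
        for override in config.get("Overrides", []):
            key = (override["Priority"], override["InstanceType"])
            zones[key].append(override["AvailabilityZone"])
    candidates = [
        (priority, instance_type, sorted(azs))
        for (priority, instance_type), azs in sorted(zones.items())
    ]

    # Each "Instances" entry records the override that was actually used.
    launched = []
    for entry in fleet.get("Instances", []):
        chosen = entry["LaunchTemplateAndOverrides"]["Overrides"]
        for instance_id in entry["InstanceIds"]:
            launched.append(
                (
                    instance_id,
                    entry["Lifecycle"],
                    entry["InstanceType"],
                    chosen["AvailabilityZone"],
                    chosen["Priority"],
                )
            )
    return candidates, launched


fleet = json.loads(SAMPLE)["Fleets"][0]
candidates, launched = summarize_fleet(fleet)

print("strategy:", fleet["SpotOptions"]["AllocationStrategy"])
for priority, instance_type, azs in candidates:
    print(f"priority {priority:>4}: {instance_type} in {', '.join(azs)}")
for instance_id, lifecycle, instance_type, az, priority in launched:
    print(f"launched {instance_id}: {lifecycle} {instance_type} in {az} (priority {priority})")
```

Looking at the full output this way, the instance that was launched (t3a.2xlarge in ap-northeast-1d) is the override with the largest priority number, 19.0, which is the lowest priority. With the capacity-optimized-prioritized strategy, EC2 Fleet optimizes for capacity first and honors priorities only on a best-effort basis, so this does not look like a contradiction.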